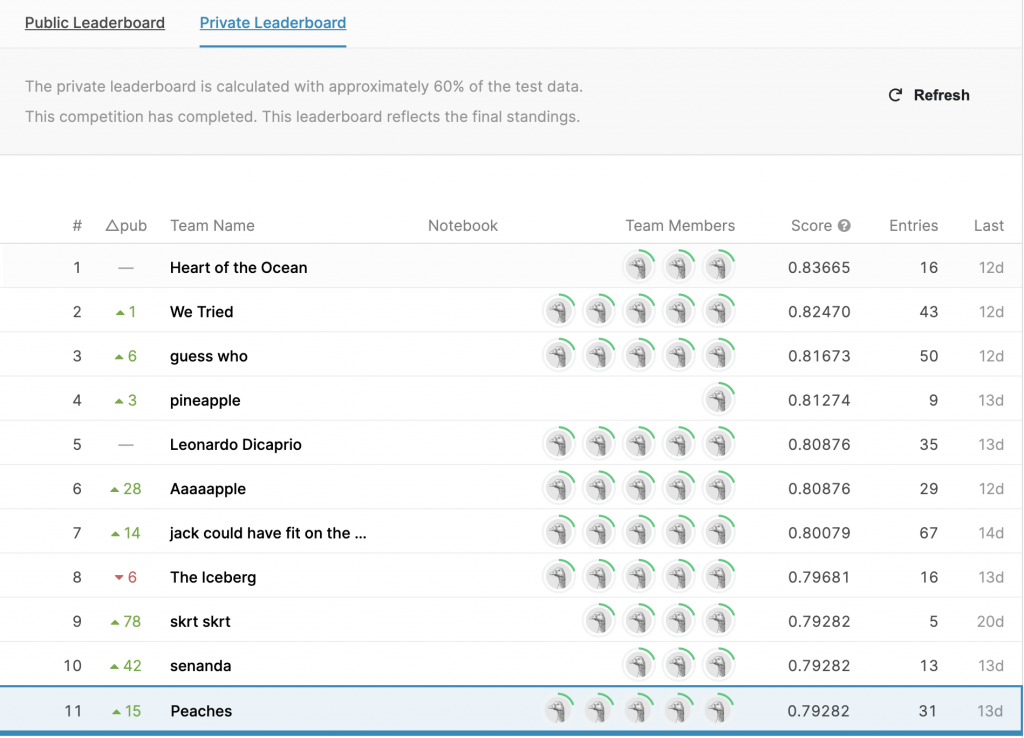

Working with a team of data scientists, I acquired capabilities to speak coherently about ideas, listen and learn from peers, and expand my toolsets to achieve goals. We created a classification algorithm that performed as one of the top teams in the private leaderboard by taking terms to code, iterate through strategies, and trial and error.

The Challenge

The sinking of the Titanic is one of the most infamous shipwrecks in history.

On April 15, 1912, during her maiden voyage, the widely considered “unsinkable” RMS Titanic sank after colliding with an iceberg. Unfortunately, there weren’t enough lifeboats for everyone onboard, resulting in the death of 1502 out of 2224 passengers and crew.

While there was some element of luck involved in surviving, it seems some groups of people were more likely to survive than others.

In this challenge, we ask you to build a predictive model that answers the question: “what sorts of people were more likely to survive?” using passenger data (ie name, age, gender, socio-economic class, etc).

The Process

We started with feature engineering and learned that there are a lot of missing values in the Age category. To fill in the missing value in Age, we use the average Age from grouping Gender and Pclass. We used several neural network algorithms to train with several different combinations of features along with Age and learned that Age is not a very good indicator for survivability. Simply selecting Sex, and Pclass with neural network provides an accuracy of 77.844%. Sex, Pclass, and Parch with neural networks offer an accuracy of 79.041%. We wanted to explore the possibility of transformation by transforming Age and fare with log and z-score, but accuracy decreased to 76% to 77% range.

Simply using feature engineering fail to increase the accuracy. Thus, we started exploring various ensemble methods.

Ensemble with a majority:

We trained four different neural networks selecting different features and making perdition for each model. Using simple combination, we took the majority result as the final prediction and got an accuracy of 79.041%

Model 1: Sex, Pclass, with Age grouped into bins

Model 2: Sex, Pclass, with a newly defined feature FamilySize (FamilySize = Sibsp + Parch +1)

Model 3: Sex, Pclass, FamilySize, and Age_bin

Model 4: Sex, Pclass, FamilySize, and fare grouped into bins

Thus far, we have only used neural networks to classify. Therefore, we trained a random forest which is an ensemble of decision trees using the bagging method.

Model 5: A random forest algorithm using the same feature set from Model 4, resulting in an accuracy of 79.640%

Model 6: Building onto our first simple combination ensemble with a majority vote, we created a new simple combination ensemble using the previous 4 models with 4 random forests, and 1 decision tree. This resulted in an accuracy of 79.401%

Other approaches:

Model 7: Using K-nearest neighbors and looking at family and friends derived from Fare, Ticket, and Names to predict survival based on the known survival of death of one person in that group. This resulted in an accuracy of 80.0239%

Model 8: By creating a women-children group identified through Names, Tickets, and Fares from the combined testing and training dataset, we classify unknown survivals from the known survival or death of a member within their women-children group. Using the xgbclassifier for gradient boosting, the accuracy is 80.0239%

Finally, we use a simple combination of all the models used previously with heavier weights to Model 7 and 8 to obtain the final prediction, resulting in an accuracy score of 80.0239% on the public leaderboard and 79.282% on the private leaderboard.

Discussion

We found that grouping women and children who were likely traveling together and classifying survival based on the survival of one of these people in each group was a good indicator of whether someone survived. We first combined the test and training data set for groups traveling together and used last name and fare. We dropped the adult males (since adult males were the least likely to survive) using title indicators from their names to isolate the women and children. We also grouped by family and friends, using ticket and fare, and a similar method to the women and children model where if one person survives, then predicting the whole group survived and using the k-nearest neighbors clustering model.

We ran into many issues with overfitting, which we figured out that age wasn’t the best predictor of survival. That helped us balance a useful complex model and a simple enough model to apply to the test set.

We learned that if a person was a woman or child in the same family and one of their family members survived, they were more likely to have survived as well.

Colleague

In this 2-week Kaggle competition for the Data Mining course at UC Berkeley, I worked with Anna Pi, Haoyun Hong, Amelia Li, Michelle Wang.